aNewDomain.net — Facebook knows more about you than the NSA. It may even know what you were thinking, especially if you deleted a last-minute post or comment. Yes, it wants to know what it missed while you were pondering life, friends or that BuzzFeed list.

Facebook today announced Trending, a new section in the top-right corner of the News Feed that highlights TV shows, events and articles that are being talked about by its users. Stories that appear inside Trending are picked by algorithms that identify spiking keywords on the social network and then find associated articles to link to. Facebook says that these articles will come from public Facebook Pages and public figures, but also from Pages you follow and from posts by friends.

Self-censorship

A recent paper, Self-Censorship on Facebook, shows that Facebook wants to know why users might abort a post at the last minute. To find out, its researchers collected data from 3.9 million users over 17 days. As noted by Ars Technica, this is the social media equivalent of online retailers finding out why people abandon online shopping carts before checkout.

According to the paper, the study tracked the habits of these 3.9 million Facebook users. Adam Kramer, a data scientist employed by the social network and a co-author of the paper, analyzed the resulting data. Kramer viewed activity on each profile by monitoring its HTML form elements, the code behind the boxes where users type, which changes whenever someone writes in Facebook chat, a status update or any other area where they interact with others.

While Facebook claims it did not (and does not) track the words that are written in each box, the company is able to determine when characters are typed, how many words are typed and whether they are posted or deleted. Kramer, with help from student Sauvik Das, spent 17 days tracking “aborted status updates, posts on other people’s timelines, and comments on other posts.”

“Decisions to self-censor appeared to be driven by two principles: people censor more when their audience is harder to define, and people censor more when the relevance of the communication ‘space’ is narrower. In other words, while posts directed at vague audiences (e.g. status updates) are censored more, so are posts directed at specifically defined targets (e.g. group posts), because it is easier to doubt the relevance of content directed at the focused audiences.”

“Surprisingly, however, we found that relative rates of self-censorship were quite high: 33 percent of all potential posts written by our sample users were censored, and 13 percent of all comments,” they concluded.
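To make the mechanics concrete, here is a minimal sketch of the kind of bookkeeping the study describes: a yes/no flag per text box, set when enough characters were typed but nothing was shared, and a rate computed from those flags. The five-character threshold comes from Facebook's own statement quoted later in this article. Everything else is an assumption for illustration: the `ComposerSession` record, its field names, the choice of denominator and the sample data are invented, and this is not Facebook's actual code.

```python
from dataclasses import dataclass

# Threshold quoted by Facebook's spokesperson in this article.
MIN_CHARS = 5


@dataclass
class ComposerSession:
    """One visit to a post or comment box (hypothetical record layout)."""
    kind: str          # "post" or "comment"
    chars_typed: int   # a count only; the text itself is never stored
    submitted: bool    # True if the user actually shared it


def is_self_censored(session: ComposerSession) -> bool:
    """Binary flag: enough characters were typed, yet nothing was shared."""
    return session.chars_typed >= MIN_CHARS and not session.submitted


def censorship_rate(sessions, kind: str) -> float:
    """Share of qualifying sessions of one kind that were abandoned.

    Assumption: sessions below the threshold are dropped as noise and
    do not count toward the denominator.
    """
    qualifying = [
        s for s in sessions
        if s.kind == kind and s.chars_typed >= MIN_CHARS
    ]
    if not qualifying:
        return 0.0
    return sum(is_self_censored(s) for s in qualifying) / len(qualifying)


# Invented sample data, purely for illustration.
sessions = [
    ComposerSession("post", 40, True),
    ComposerSession("post", 12, False),    # typed, then abandoned
    ComposerSession("post", 3, False),     # under five characters: ignored
    ComposerSession("post", 25, True),
    ComposerSession("comment", 8, True),
    ComposerSession("comment", 6, False),  # typed, then abandoned
    ComposerSession("comment", 30, True),
    ComposerSession("comment", 15, True),
]

print(f"posts: {censorship_rate(sessions, 'post'):.0%}")
print(f"comments: {censorship_rate(sessions, 'comment'):.0%}")
```

Note that nothing in this sketch needs the words themselves. A character count and a posted-or-not flag are enough to produce figures like the 33 percent and 13 percent above, which is why the privacy question turns on consent rather than on content.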

Big Data Abuse

It is clear that the public does not understand the social, ethical, legal and policy issues that underpin the big data phenomenon.

Image credit: J Bizzie/Flickr

Below is Sean Rintel’s cogent argument against Facebook’s self-censorship study. Rintel is a communication lecturer from Australia:

Facebook is arguably denying users the right to informational self-determination in terms of their informed consent to participating in the research and by storing this data longer than needed for immediate technical purposes. Does this kind of study violate user privacy? The authors claim not.

“These analyses were conducted in an anonymous manner, so researchers were not privy to any specific user’s activity … the content of self-censored posts and comments was not sent back to Facebook’s servers.”

Rintel continues:

When I contacted the researchers to clarify what was sent, Facebook communications manager Matt Steinfeld responded that the authors saw only a ‘binary value.’ ‘We didn’t track how long the post was … we only looked at instances where at least five characters were entered, to mitigate noise in our data,’ he said. ‘Nevertheless, self-censored posts of 5 characters or 300 characters were treated the same as part of this research.’ Steinfeld also asserted that Facebook’s Data Use Policy, which is available to users before they join Facebook (and remains available), constituted informed consent.

Facebook’s Data Use Policy on ‘Information we receive and how it is used’ includes a section on data such as that collected for the study:

We receive data about you whenever you use or are running Facebook, such as when you look at another person’s timeline, send or receive a message, search for a friend or a Page, click on, view or otherwise interact with things, use a Facebook mobile app, or make purchases through Facebook.

In the “How we use the information we receive” section, the last bullet point includes uses such as: internal operations, including troubleshooting, data analysis, testing, research and service improvement. This is at best a form of passive informed consent. The MIT Technology Review proposes that consent should be both active and real-time in the age of big data.


As we move into the next stage of big data marketing, you would be better off writing your thoughts with pen and paper before typing anything. Just to be sure that what you were thinking is actually private.

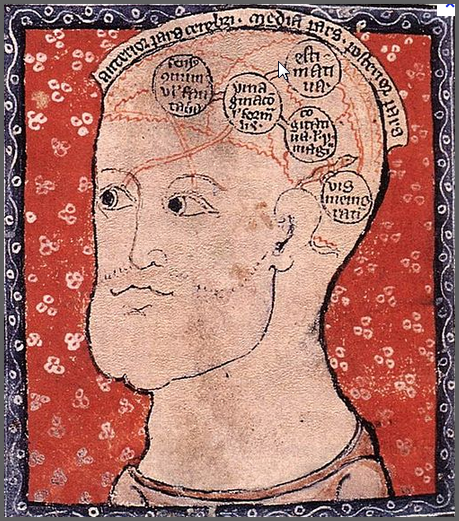

Photo credit: Wikimedia

Do you know what Zuckerberg is thinking? Clearly not about you … or your privacy. He thinks that privacy is a dated concept. Except, of course, when the “NSA blows it.” The next new Facebook app will be trending thoughts … which will be made with a predictive tool. Here is the result:

Following poster credit: UKIP party UK

For aNewDomain.net, I’m David Michaelis.

Based in Australia, David Michaelis is a world-renowned international journalist and founder of Link TV. At aNewDomain.net, he covers the global beat, focusing on politics and other international topics of note for our readers in a variety of forums. Email him at DavidMc@aNewDomain.net.