aNewDomain – The White House isn't too worried about artificial intelligence wiping out the human race, a danger that British physicist Stephen Hawking, Tesla chief Elon Musk and even Microsoft co-founder Bill Gates have warned about in recent years.

That's just one takeaway from a new 48-page AI report the White House released to policy makers this week, according to Jim Hendler, AI expert, researcher and coauthor of the new book, Social Machines: The Coming Collision of Artificial Intelligence, Social Networking and Humanity.

Addressing such concerns, the report says that fears about super-intelligent and evil computers shouldn't have much impact on current US policy toward AI. "And it gets that part right … we all need to cut past the hype and look at what's really going on in AI," he said, "so we can pay attention to the real risks as opposed to the science fiction risks."

Hendler deconstructed the government’s first ever AI policy report in an exclusive interview with aNewDomain today.

Read the report in full below the fold. -Ed.

The things to worry about aren't the frightening HAL 9000 or Skynet scenarios, Hendler says, "but the very real economic, societal, ethical and safety concerns AI poses for the foreseeable future."

The report does a fair job of addressing such issues overall, Hendler says, "and it is good as far as it goes."

But the report isn't detailed enough to be effective in helping policy makers and American citizens "better understand the underlying technical issues and tradeoffs behind AI, its economic drivers and the real challenges and risks it poses," he says.

It also "punts," he says, in two areas: AI's role in the military and its potential economic ramifications.

AI and weapons systems

"AI weaponization is an issue we should think through, and not in the 'weapons gone wrong' and becoming Skynet sense," says Hendler. "What needs to be thought through are protection mechanisms and how safety mechanisms can be built into the systems from the very beginning."

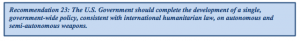

The report does discuss Lethal Autonomous Weapon Systems (LAWS), he says, "but it needs to push harder in areas like safety and controls" that protect systems from accidents, hacking and use by terrorists and other bad actors.

"Over the past several years … issues concerning the development of so-called 'Lethal Autonomous Weapon Systems' (LAWS) have been raised by technical experts (and) ethicists. The US … anticipates continued robust international discussion of these potential weapon systems going forward."

Most importantly, “if we are going to deploy a war using (AI based weapons and drones), there needs to be measures to ensure humans are in the loop — as they should be for all things that have high consequences,” Hendler adds.

Trusting AI to the point where humans leave the decision-making scene entirely would be disastrous, on the military stage and in every other critical sector.

AI, automation and the economy

The report says a separate study is coming in a few months, but “if it only addresses the issues of how AI stands to affect the jobs of the nation’s blue collar workers, it will miss the boat,” says Hendler.

It's unfortunate, he adds, that the White House AI report doesn't say more about the economic impact of AI and doesn't address some of the places where that impact may be greatest.

The report correctly points out that autonomy and automation will threaten the workforce and require wholesale changes there, but it fails to consider "the impact of cognitive assistants on the more educated workforce," he said.

“One important concern arising from prior waves of automation … is the potential impact on certain types of jobs and sectors, and the resulting impacts on income inequality,” says the report. “Because AI has the potential to eliminate or drive down wages of some jobs, especially low- and medium-skill jobs, policy interventions will likely be needed to ensure that AI’s economic benefits are broadly shared …”

Just think of all the skilled jobs that smart systems will edge out, he says, citing the legal clerks, support staff and paralegals at a big law firm as an example. Currently, Hendler says, you might have eight support staff for every lawyer. But once smart systems can duplicate their jobs, "the whole pyramid gets upended and you end up with the reverse, eight lawyers for every (AI-based legal researcher).

"The paper doesn't discuss this at all, but it should. From profession to profession, the whole system and the whole model is going to have to be rethought," he said.

Workforce development and diversity

The report digs deep in terms of training and diversity, outlining concerns that echo those in a recent Bloomberg editorial: that AI so far is just "a sea of dudes."

One thing the report doesn't recommend, says Hendler, is greater emphasis on strong female and minority role models in the kinds of movies and TV shows that historically have inspired young people to pursue computer science careers.

Historically, “most science fiction has been aimed at adolescent males, the ones who might become computer scientists and AI scientists,” says Hendler, pointing out that in the movie, “2001: A Space Odyssey,” there were no female characters to speak of.

"Some of the newer movies now are featuring female voices and characters – but they still don't really have strong female characters," he added. "In fact, movies like 'Her' and 'Ex Machina' are kind of the opposite."

“The lack of gender and racial diversity in the AI workforce mirrors the lack of diversity in the technology industry and the field of computer science generally. Unlocking the full potential of the American people, especially in STEM fields, in entrepreneurship, and in the technology industry, is a priority …”

Accountability

This is a large part of the report, but it doesn't dive deeply enough into what Hendler sees as the main risk of AI moving forward: that humans will rely too much on the systems and eventually cede control of critical decisions to them.

Consider the Russian who saved the world, says Hendler: Soviet lieutenant colonel Stanislav Petrov. Thirty-three years ago, Petrov famously averted nuclear war when he chose to disregard computers informing him that a US nuclear missile was on its way. He didn't believe the warning and chose not to report the alarm to Soviet high command, which would have prompted the USSR to strike the US and, in so doing, launch full-out nuclear war.

“Petrov made his decision by taking into account the complex context around him. He was trained in a specific context — his machine and the lights — but when things went down, he looked beyond that context and reasoned that it didn’t make sense. And he took appropriate action,” Hendler said.

That’s Hendler’s big worry about the future of AI, one he urges governments to think deeply about. “As AI gets smarter, at some point some people are going to want to remove the human from the loop,” he said.

Removing humans from key warfare decisions is already a topic of discussion around drones and cyberwarfare, but that's a dangerous path. With AI, he said, the real "issue is having someone (i.e., a human) with intuition being somewhere in the loop before the missiles get launched."

For more on these issues, check out the book Hendler coauthored with Alice Mulvehill, Social Machines: The Coming Collision of Artificial Intelligence, Social Networking and Humanity (Springer, 2016). It’s available now.

White House AI Report 2016 by Gina Smith on Scribd

For aNewDomain, I’m Gina Smith.

White House AI image: Futurism.com, All Rights Reserved. HAL 9000 image: Via YouTube, All Rights Reserved.

Here’s Hendler talking at TED in April 2015

Below, watch Barack Obama at the Frontiers Conference in Pittsburgh.

Here’s a movie clip of Stanley Kubrick’s 2001: A Space Odyssey